PolyU develops novel multi-modal agent to facilitate long video understanding by AI, accelerating development of generative AI-assisted video analysis

HONG KONG SAR - Media OutReach Newswire - 10 June 2025 - While Artificial Intelligence (AI) technology is evolving rapidly, AI models still struggle to understand long videos. A research team from The Hong Kong Polytechnic University (PolyU) has developed a novel video-language agent, VideoMind, that enables AI models to perform long video reasoning and question-answering tasks by emulating the way humans think.

The VideoMind framework incorporates an innovative Chain-of-Low-Rank Adaptation (LoRA) strategy to reduce the demand for computational resources and power, advancing the application of generative AI in video analysis. The findings have been submitted to world-leading AI conferences.

Videos, especially those longer than 15 minutes, carry information that unfolds over time, such as the sequence of events, causality, coherence and scene transitions. To understand the video content, AI models therefore need not only to identify the objects present, but also to take into account how they change throughout the video. Because the visual content of a video occupies a large number of tokens, video understanding requires vast amounts of computing capacity and memory, which makes it difficult for AI models to process long videos.

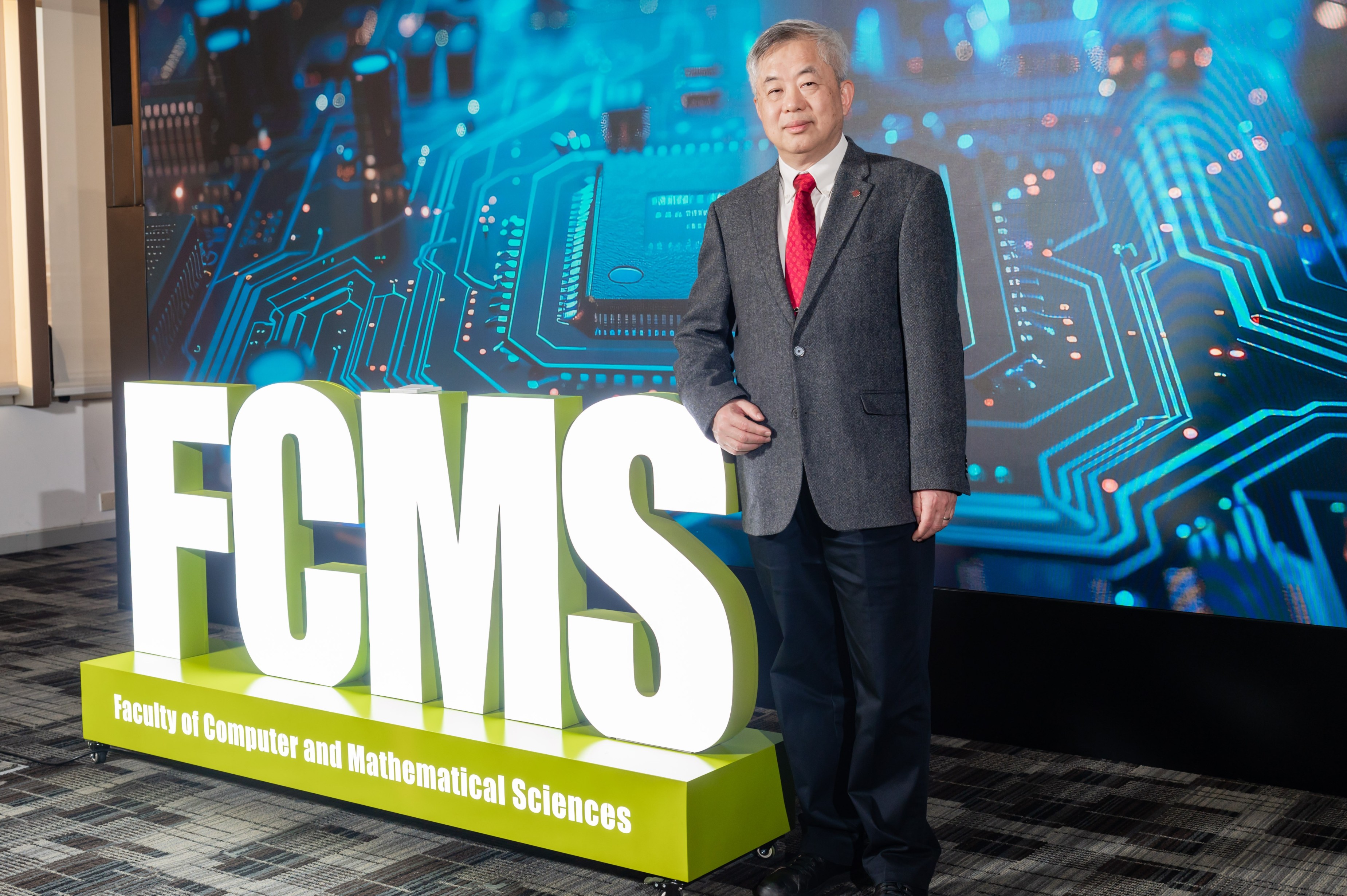

Prof. Changwen CHEN, Interim Dean of the PolyU Faculty of Computer and Mathematical Sciences and Chair Professor of Visual Computing, and his team have achieved a breakthrough in research on long video reasoning by AI. In designing VideoMind, they drew on the way humans understand videos and introduced a role-based workflow. The four roles included in the framework are: the Planner, to coordinate all other roles for each query; the Grounder, to localise and retrieve relevant moments; the Verifier, to validate the accuracy of the retrieved moments and select the most reliable one; and the Answerer, to generate the query-aware answer. This progressive approach to video understanding helps address the challenge of temporally grounded reasoning that most AI models face.
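The role-based workflow described above can be pictured with a minimal Python sketch. It is an illustration only and not the VideoMind code: the names `Roles` and `run_pipeline`, the function signatures and the toy scores are all hypothetical, and each role is a plain function standing in for a model call.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# A moment is a (start_seconds, end_seconds) span in the video.
Moment = Tuple[float, float]


@dataclass
class Roles:
    """Hypothetical container for the four roles; each field stands in for a model call."""
    plan: Callable[[str], List[str]]                 # Planner: decides which roles to call
    ground: Callable[[str], List[Moment]]            # Grounder: proposes candidate moments
    verify: Callable[[str, Moment], float]           # Verifier: scores one candidate
    answer: Callable[[str, Optional[Moment]], str]   # Answerer: produces the final answer


def run_pipeline(query: str, roles: Roles) -> Tuple[str, Optional[Moment]]:
    """Run Planner -> Grounder -> Verifier -> Answerer for a single query."""
    steps = roles.plan(query)
    moment: Optional[Moment] = None
    if "ground" in steps:
        candidates = roles.ground(query)
        if candidates and "verify" in steps:
            # Keep the candidate that the Verifier scores as most reliable.
            moment = max(candidates, key=lambda m: roles.verify(query, m))
        elif candidates:
            moment = candidates[0]
    return roles.answer(query, moment), moment


# Toy stand-ins (assumed values, for illustration only).
toy_scores = {(10.0, 20.0): 0.2, (300.0, 320.0): 0.9, (900.0, 930.0): 0.5}
toy_roles = Roles(
    plan=lambda q: ["ground", "verify", "answer"],
    ground=lambda q: list(toy_scores),
    verify=lambda q, m: toy_scores[m],
    answer=lambda q, m: "no moment" if m is None else f"Answer based on {m[0]:.0f}s-{m[1]:.0f}s",
)

reply, chosen = run_pipeline("When does the goal happen?", toy_roles)
print(reply)  # Answer based on 300s-320s
```

In this sketch the Planner returns a list of steps, so a simple query could skip grounding and verification and go straight to the Answerer.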

Another core innovation of the VideoMind framework lies in its adoption of a Chain-of-LoRA strategy. LoRA is a fine-tuning technique that has emerged in recent years. It adapts AI models for specific uses without performing full-parameter retraining. The innovative Chain-of-LoRA strategy pioneered by the team involves applying four lightweight LoRA adapters in a unified model, each of which is designed for calling a specific role. With this strategy, the model can dynamically activate role-specific LoRA adapters during inference via self-calling to switch seamlessly among these roles, eliminating the need for, and the cost of, deploying multiple models while enhancing the efficiency and flexibility of the single model.
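The idea of switching role-specific adapters on one shared model can be sketched in a few lines of plain Python. The sketch uses the standard LoRA formulation, in which a frozen weight matrix W is adjusted by a low-rank product B·A; how VideoMind actually wires its adapters is not described here, so the class name `SharedLayerWithAdapters`, the `set_role` method and all numbers are assumptions made for illustration.

```python
from typing import Dict, List, Optional, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class SharedLayerWithAdapters:
    """One frozen weight matrix shared by all roles, plus one small (A, B) pair per role."""

    def __init__(self, weight: Matrix, adapters: Dict[str, Tuple[Matrix, Matrix]], scale: float = 1.0):
        self.weight = weight        # shape d x d, never modified
        self.adapters = adapters    # role -> (A with shape r x d, B with shape d x r)
        self.scale = scale
        self.active: Optional[str] = None

    def set_role(self, role: str) -> None:
        """Activate the adapter for one role; the base weights stay untouched."""
        if role not in self.adapters:
            raise KeyError(f"unknown role: {role}")
        self.active = role

    def forward(self, x: Vector) -> Vector:
        """Compute W x, plus scale * B (A x) when an adapter is active."""
        out = matvec(self.weight, x)
        if self.active is not None:
            a, b = self.adapters[self.active]
            delta = matvec(b, matvec(a, x))
            out = [o + self.scale * d for o, d in zip(out, delta)]
        return out


# Toy example with d = 2 and rank r = 1 (all values are assumed).
layer = SharedLayerWithAdapters(
    weight=[[1.0, 0.0], [0.0, 1.0]],
    adapters={
        "grounder": ([[1.0, 0.0]], [[0.5], [0.0]]),
        "verifier": ([[0.0, 1.0]], [[0.0], [1.0]]),
    },
)
x = [2.0, 3.0]
layer.set_role("grounder")
grounder_out = layer.forward(x)   # [3.0, 3.0]
layer.set_role("verifier")
verifier_out = layer.forward(x)   # [2.0, 6.0]
```

Each adapter stores 2·d·r numbers instead of the d·d numbers of a full weight matrix, which is why keeping several adapters on one base model is much cheaper than deploying several full models.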

VideoMind is open source on GitHub and Hugging Face. Details of the experiments conducted to evaluate its effectiveness in temporally grounded video understanding across 14 diverse benchmarks are also available. Comparing VideoMind with some state-of-the-art AI models, including GPT-4o and Gemini 1.5 Pro, the researchers found that VideoMind achieved higher grounding accuracy than all competitors in challenging tasks involving videos with an average duration of 27 minutes. Notably, the team included two versions of VideoMind in the experiments: one with a smaller, 2 billion (2B) parameter model, and another with a bigger, 7 billion (7B) parameter model. The results showed that, even at the 2B size, VideoMind still yielded performance comparable to that of many other 7B models.

Prof. Chen said, "Humans switch among different thinking modes when understanding videos: breaking down tasks, identifying relevant moments, revisiting these to confirm details and synthesising their observations into coherent answers. The process is very efficient with the human brain using only about 25 watts of power, which is about a million times lower than that of a supercomputer with equivalent computing power. Inspired by this, we designed the role-based workflow that allows AI to understand videos like humans, while leveraging the chain-of-LoRA strategy to minimise the need for computing power and memory in this process."

AI is at the core of global technological development. The advancement of AI models is, however, constrained by insufficient computing power and excessive power consumption. Built upon a unified, open-source model, Qwen2-VL, and augmented with additional optimisation tools, the VideoMind framework has lowered the technological cost and the threshold for deployment, offering a feasible solution to the power consumption bottleneck in AI models.

Prof. Chen added, "VideoMind not only overcomes the performance limitations of AI models in video processing, but also serves as a modular, scalable and interpretable multimodal reasoning framework. We envision that it will expand the application of generative AI to various areas, such as intelligent surveillance, sports and entertainment video analysis, video search engines and more."

Hashtag: #PolyU #AI #LLMs #VideoAnalysis #IntelligentSurveillance #VideoSearch

The issuer is solely responsible for the content of this announcement.